The financial services industry, particularly banking, stands on the precipice of a transformative shift driven by the rapid advancement and adoption of agentic AI. While AI has been integrated into various aspects of banking for years, primarily in areas like fraud detection and algorithmic trading, agentic AI represents a paradigm shift. It moves beyond simple automation and predictive analytics towards autonomous, decision-making systems capable of acting on behalf of the institution. This evolution, while promising significant gains in efficiency and customer service, simultaneously exposes deep-seated vulnerabilities in the core infrastructure of banks, particularly concerning security, data governance, and decision-making protocols. The urgency to address these vulnerabilities is paramount, not only to mitigate risks but also to unlock the full potential of agentic AI in a secure and responsible manner. The failure to do so could lead to catastrophic consequences, ranging from massive data breaches and financial losses to regulatory penalties and reputational damage.

What's Happening: Agentic AI Exposes Banking Weaknesses

Agentic AI, unlike traditional AI, is designed to perform tasks autonomously, learning and adapting as it interacts with its environment. In the banking context, this could involve managing customer accounts, processing loan applications, or even executing complex trading strategies, all with minimal human intervention. However, this level of autonomy necessitates robust security measures and clear data governance frameworks, areas where many banks are demonstrably lagging.

The integration of agentic AI is revealing several critical weaknesses. Firstly, existing cybersecurity protocols are proving inadequate to protect against sophisticated attacks targeting AI systems. Attackers are increasingly focusing on data poisoning (sometimes called "AI poisoning"), in which malicious records are injected into an AI system's training data, causing it to make flawed decisions. The autonomous nature of agentic AI amplifies the impact of such attacks, because a compromised agent acts on its flawed outputs directly and can propagate errors across connected systems before a human notices.

Secondly, data governance is emerging as a significant challenge. Agentic AI relies on vast amounts of data to learn and make informed decisions. However, many banks struggle with fragmented data silos, inconsistent data quality, and a lack of clear ownership. This can lead to AI systems making decisions based on incomplete or inaccurate information, resulting in biased outcomes or regulatory non-compliance. Furthermore, the use of sensitive customer data by AI raises serious privacy concerns, requiring banks to implement stringent data protection measures in accordance with regulations like GDPR and the California Consumer Privacy Act (CCPA).
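To make the data quality point concrete, here is a minimal sketch of a gate that quarantines incomplete or implausible records before an agent sees them. The field names in `REQUIRED_FIELDS` and the single plausibility rule are hypothetical, chosen only for illustration; a real bank would derive them from its own data governance policy.

```python
# Hypothetical required fields; "data_owner" reflects the need for clear ownership.
REQUIRED_FIELDS = ("customer_id", "income", "source_system", "data_owner")


def quality_issues(record: dict) -> list[str]:
    """Return a list of human-readable problems found in one record."""
    issues = []
    for name in REQUIRED_FIELDS:
        if record.get(name) in (None, ""):
            issues.append(f"missing {name}")
    income = record.get("income")
    # Illustrative plausibility rule: income cannot be negative.
    if isinstance(income, (int, float)) and income < 0:
        issues.append("negative income")
    return issues


def split_by_quality(records):
    """Separate records the agent may use from records needing remediation."""
    clean, quarantined = [], []
    for record in records:
        issues = quality_issues(record)
        if issues:
            quarantined.append((record, issues))
        else:
            clean.append(record)
    return clean, quarantined


records = [
    {"customer_id": "C1", "income": 52000, "source_system": "core", "data_owner": "retail"},
    {"customer_id": "C2", "income": 48000, "source_system": "crm", "data_owner": ""},
    {"customer_id": "C3", "income": -10, "source_system": "core", "data_owner": "retail"},
]
clean, quarantined = split_by_quality(records)
```

Keeping the reasons alongside each quarantined record gives the data owner something actionable, rather than silently dropping rows the model never sees.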

Thirdly, the delegation of decision-making authority to AI systems raises complex questions about accountability and transparency. When an AI makes a flawed decision that results in financial loss or regulatory violation, it can be difficult to determine who is responsible. The lack of transparency in AI algorithms, often referred to as the "black box" problem, further complicates matters. Banks need to establish clear lines of accountability and implement mechanisms for monitoring and auditing AI decisions to ensure compliance and ethical behavior.

Industry Context: A Race for AI Dominance Amidst Growing Risks

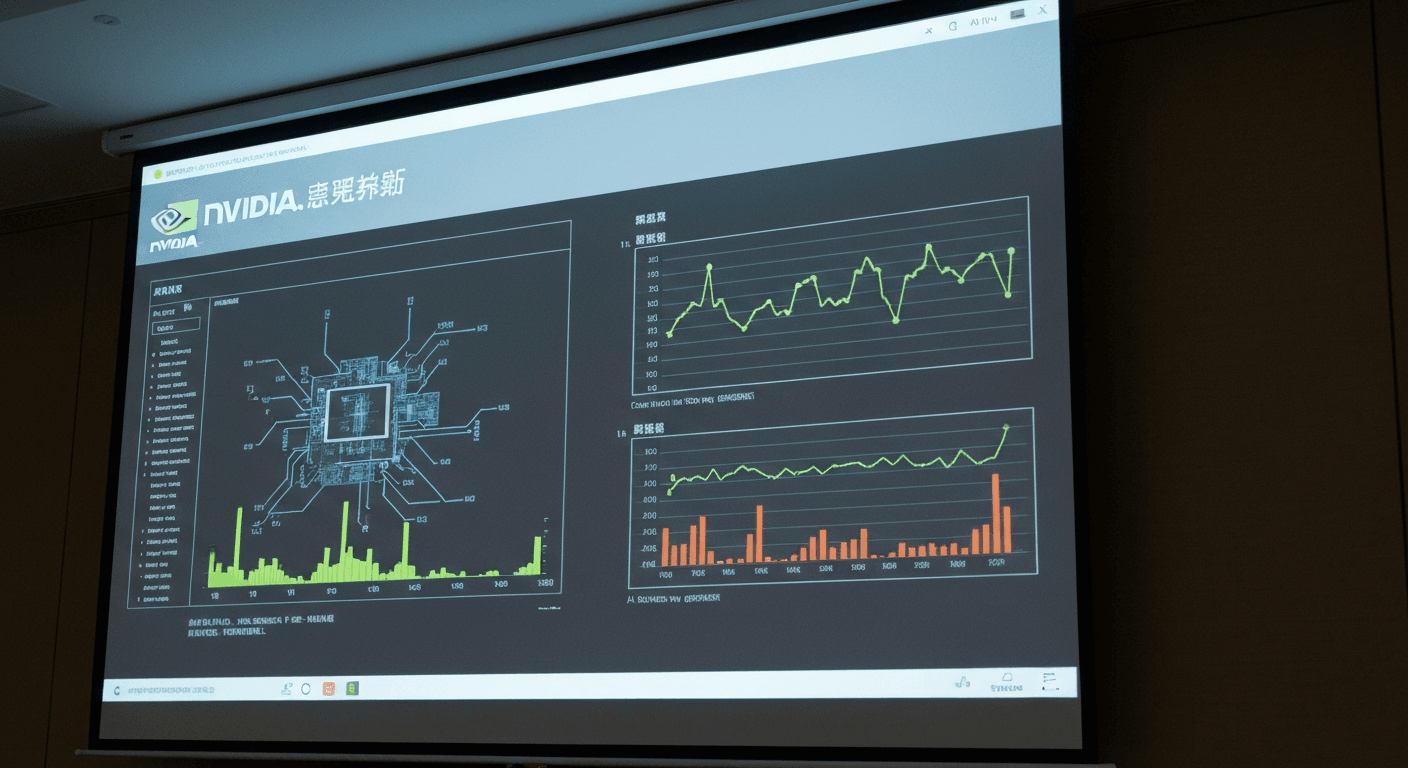

The push towards agentic AI in banking is part of a broader trend of digital transformation and a race for competitive advantage. Banks are under immense pressure to reduce costs, improve efficiency, and enhance customer experience. AI, particularly agentic AI, is seen as a key enabler of these goals. Major players like JPMorgan Chase, Bank of America, and Goldman Sachs are heavily investing in AI research and development, seeking to deploy AI-powered solutions across various business lines.

However, this race for AI dominance is creating a precarious situation. The focus on rapid deployment often overshadows the need for robust security and governance frameworks. Many banks are prioritizing functionality over security, leaving them vulnerable to attacks. Furthermore, the shortage of skilled AI professionals, particularly those with expertise in cybersecurity and data governance, is exacerbating the problem.

Compared to more agile fintech companies, traditional banks often face greater challenges in adopting and securing agentic AI. Fintech firms, unencumbered by legacy systems and bureaucratic processes, can iterate faster and implement more innovative security solutions. However, even fintech companies are not immune to the risks associated with agentic AI. A recent report by the Financial Stability Board (FSB) highlighted the potential systemic risks posed by the increasing reliance on AI in the financial sector, emphasizing the need for international cooperation and regulatory oversight. The FSB report directly calls out the need for "explainable AI" and robust validation processes to mitigate unintended consequences.

Why This Matters for Professionals: Practical Impact and Action Items

The rise of agentic AI has profound implications for accounting professionals, CFOs, and fintech practitioners working in the banking sector. These professionals are at the forefront of managing financial risk, ensuring regulatory compliance, and implementing new technologies. They need to understand the specific challenges and opportunities presented by agentic AI and take proactive steps to address them.

Here are some practical considerations and action items:

- Strengthen Security Audits: Accountants and auditors need to incorporate AI-specific security audits into their risk assessment frameworks. This includes evaluating the security of AI training data, algorithms, and infrastructure, as well as assessing the effectiveness of AI-powered security tools. They should refer to guidelines from organizations like the AICPA and ISACA on auditing AI systems.

- Data Governance Frameworks: CFOs and finance leaders need to establish comprehensive data governance frameworks that address data quality, data privacy, and data security. This includes defining clear roles and responsibilities for data management, implementing data lineage tracking, and ensuring compliance with relevant regulations like GDPR and CCPA.

- AI Ethics and Transparency: Fintech practitioners and developers need to prioritize AI ethics and transparency in the design and deployment of agentic AI systems. This includes using explainable AI (XAI) techniques to make AI decisions more transparent, implementing bias detection and mitigation algorithms, and establishing mechanisms for human oversight and intervention.

- Regulatory Compliance: All professionals must stay abreast of evolving regulations related to AI in finance. Regulators like the SEC and the OCC are increasingly focusing on AI risk management and governance. Banks need to proactively engage with regulators and adapt their AI strategies to comply with new requirements.

- Upskilling and Training: Banks need to invest in upskilling and training programs to equip their workforce with the skills needed to manage and secure AI systems. This includes training in areas like AI cybersecurity, data science, and AI ethics.
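The "human oversight and intervention" item above can be sketched as a routing rule that decides whether an agent's proposed action runs automatically or waits for a person. Everything here is hypothetical: the `ProposedAction` type, the `AUTO_APPROVE_LIMIT` threshold, and the action kinds in `ALWAYS_REVIEW` are illustrative assumptions, and real limits would come from a bank's risk policy.

```python
from dataclasses import dataclass

AUTO_APPROVE_LIMIT = 10_000.0                     # hypothetical monetary threshold
ALWAYS_REVIEW = {"close_account", "loan_denial"}  # hypothetical high-impact actions


@dataclass(frozen=True)
class ProposedAction:
    agent_id: str
    kind: str
    amount: float
    explanation: str  # the agent's stated reason, kept for reviewers


def route(action: ProposedAction) -> str:
    """Decide how a proposed agent action is handled."""
    # An action with no explanation cannot be reviewed or audited later.
    if not action.explanation.strip():
        return "reject"
    # High-impact or high-value actions always go to a human.
    if action.kind in ALWAYS_REVIEW or action.amount > AUTO_APPROVE_LIMIT:
        return "human_review"
    return "auto_execute"


small_transfer = ProposedAction("ops-agent", "transfer", 250.0, "scheduled bill payment")
large_transfer = ProposedAction("ops-agent", "transfer", 50_000.0, "customer request")
denial = ProposedAction("loan-agent", "loan_denial", 0.0, "debt-to-income too high")
unexplained = ProposedAction("ops-agent", "transfer", 100.0, "")

decisions = [route(a) for a in (small_transfer, large_transfer, denial, unexplained)]
```

The design choice worth noting is that the gate sits outside the agent: the thresholds are plain configuration that risk and compliance teams can read and change without retraining anything.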

The Bottom Line: Forward-Looking Analysis

The integration of agentic AI into banking is a double-edged sword. It offers significant gains in efficiency and customer service, but it also exposes critical vulnerabilities in security, data governance, and decision-making protocols. Banks must address these vulnerabilities by implementing robust security measures, establishing comprehensive data governance frameworks, and promoting AI ethics and transparency. Those that fail to do so risk data breaches, financial losses, regulatory penalties, and reputational damage. The path forward requires a collaborative effort between banks, regulators, and technology providers to ensure that AI is deployed responsibly and securely. The long-term success of agentic AI in banking hinges on building a foundation of trust and security that mitigates the risks while maximizing the benefits.

Fintech.News Desk

Editorial Team

The Fintech.News Desk covers the latest developments in fintech, accounting technology, tax regulation, and AI in finance. We combine AI-assisted research with editorial review to deliver analytical news coverage for finance professionals.
