The intersection of artificial intelligence and national security has long been a subject of intense debate, fraught with ethical dilemmas and complex societal implications. The recent resignation of OpenAI's robotics lead over the company's partnership with the Pentagon underscores growing tension within the AI community over developing and deploying AI for military purposes. The resignation is not an isolated incident. It is a symptom of a larger conflict between the pursuit of technological advancement and the moral considerations that must accompany it, and that conflict matters more as AI spreads into industries like finance and accounting. The choices AI developers make now will shape the future of these technologies and their role in society, making this a critical moment for reflection and responsible innovation.

What's Happening: A Clash of Values at OpenAI

The core issue is OpenAI's decision to collaborate with the U.S. Department of Defense. The specific scope of the partnership has not been fully disclosed. It could involve applying AI to areas such as data analysis, logistics, or potentially even autonomous systems. The move triggered internal dissent, culminating in the resignation of the head of OpenAI's robotics division, and that departure highlights a fundamental disagreement over the ethical boundaries of AI development.

OpenAI, founded with a mission to ensure that AI benefits all of humanity, now faces accusations of putting commercial or strategic interests ahead of its founding principles. Critics argue that military applications inherently contradict that goal. They raise concerns about autonomous weapons systems, expanded surveillance, and the deepening of existing power imbalances. The resignation serves as a public statement that the departing lead believes the partnership crosses an ethical red line. It may also signal a broader rift within the company, since other employees could share similar reservations without voicing them. The episode raises questions about OpenAI's internal governance as well, particularly how the company weighs ethical considerations and employee input when making major decisions.

Industry Context: The AI Ethics Landscape

OpenAI is not alone in grappling with the ethical implications of its technology. The AI industry as a whole faces increasing scrutiny over bias, fairness, and accountability. Google and Microsoft have also faced internal and external pressure over AI projects, particularly those tied to defense and law enforcement. Google, for example, declined in 2018 to renew its contract for Project Maven, a Pentagon initiative that used AI to analyze drone surveillance imagery, after significant employee pushback. That episode set a precedent for tech companies yielding to internal ethical concerns. It also showed how persistently governments and defense agencies push to integrate AI into their operations.

OpenAI's situation differs from these examples in that the dissent came from its robotics lead. That raises the possibility of AI being applied more directly in physical systems, where the consequences could be more immediate and more harmful. OpenAI's origins as a non-profit and its explicit commitment to ethical AI development also create higher expectations of responsible behavior than those placed on purely profit-driven corporations. The move invites comparison with companies like Palantir, which has built much of its business on government and defense contracts and openly defends that work. Together, these cases point to a split in the industry: some companies prioritize profit and national security, while others emphasize ethical considerations and social responsibility.

The regulatory landscape surrounding AI ethics is still evolving. The United States has no law specifically prohibiting AI development for military purposes. Military AI is instead shaped by Department of Defense policy such as Directive 3000.09, Autonomy in Weapon Systems. Internationally, concern about autonomous weapons is growing. States continue to discuss how to regulate "lethal autonomous weapons systems" (LAWS) under the UN Convention on Certain Conventional Weapons, and several countries have called for a ban on their development and deployment. The European Union has adopted a comprehensive AI Act that regulates AI across many sectors according to risk level. However, systems used exclusively for military, defense, or national security purposes fall outside its scope. These efforts are not yet fully implemented, but they signal growing international momentum toward guiding AI development with ethical principles and subjecting it to oversight.

Why This Matters for Professionals: Implications for Finance and Accounting

The ethical debate over AI in defense has significant implications for finance and accounting professionals, especially as AI becomes more deeply integrated into their work. AI-powered automation is already transforming tasks such as fraud detection, risk management, and financial reporting. The same ethical considerations that apply to military AI also apply to financial AI, albeit in a different context.

For example, credit-scoring algorithms trained on historical data can perpetuate existing biases, producing discriminatory lending practices that disproportionately affect marginalized communities. Similarly, AI-powered trading systems can amplify market volatility and create unfair advantages for certain players. Accountants and CFOs must understand these ethical pitfalls and take steps to ensure that the AI systems they use are fair, transparent, and accountable.
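One common way to screen a credit model for the kind of bias described above is the disparate impact ratio. This is the approval rate of one group divided by the approval rate of a reference group. The example below is a minimal sketch. It assumes decisions are available as (group, approved) pairs, and the function names and sample data are hypothetical. The 0.8 cutoff borrows the "four-fifths rule" from the U.S. EEOC's Uniform Guidelines on Employee Selection Procedures. It is used here only as a rough flag for further review, not as a legal test for lending.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate for each group.

    `decisions` is an iterable of (group_label, approved) pairs,
    where `approved` is a bool.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions, protected, reference):
    """Approval rate of `protected` divided by approval rate of `reference`."""
    rates = approval_rates(decisions)
    if rates[reference] == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return rates[protected] / rates[reference]


# Hypothetical, made-up outcomes for two applicant groups "A" and "B".
sample = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 42 + [("B", False)] * 58
)

ratio = disparate_impact_ratio(sample, protected="B", reference="A")
flagged = ratio < 0.8  # four-fifths heuristic, used only as a screening cue
print(f"disparate impact ratio: {ratio:.2f}, flagged for review: {flagged}")
```

With this made-up sample, group B's approval rate (42%) is 0.70 times group A's (60%). That falls below the 0.8 cue, so the model would be flagged for a closer look. A low ratio does not by itself prove discrimination, and a passing ratio does not rule it out. It is one input to a broader fairness review.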

Action Items and Considerations:

- Due Diligence: Before implementing any AI system, conduct thorough due diligence to assess its potential ethical risks. This includes evaluating the data used to train the AI, the algorithms themselves, and the potential impact on stakeholders.

- Transparency and Explainability: Prioritize AI systems that are transparent and explainable. Understand how the AI arrives at its decisions and be able to explain those decisions to stakeholders. This aligns with the increasing demand for explainable AI (XAI), which aims to make AI decision-making processes more understandable to humans.

- Bias Mitigation: Actively work to mitigate bias in AI systems. This includes using diverse and representative datasets, employing bias detection and correction techniques, and regularly monitoring the AI's performance for signs of bias.

- Ethical Frameworks: Develop and implement ethical frameworks for the use of AI in finance and accounting. These frameworks should address issues such as fairness, transparency, accountability, and data privacy. Consider adopting or adapting existing professional standards, such as the AICPA Code of Professional Conduct or the IMA Statement of Ethical Professional Practice.

- Continuous Monitoring: Continuously monitor the performance of AI systems and be prepared to make adjustments as needed. Ethical considerations are not static; they evolve as technology advances and societal values change.

- Regulatory Compliance: Stay informed about evolving regulations related to AI and data privacy, such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). Ensure that AI systems comply with all applicable laws and regulations.
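For the continuous-monitoring item, one simple and widely used check is the population stability index (PSI). PSI compares how a model's scores are distributed today against how they were distributed when the model was validated. The example below is a minimal sketch under stated assumptions. It assumes scores between 0 and 1 and hypothetical fixed cut points. The function names and sample data are illustrative, and the 0.1 and 0.25 thresholds are common industry rules of thumb rather than a regulatory standard.

```python
import math
from bisect import bisect_right


def bin_shares(scores, edges):
    """Share of scores falling in each bin defined by sorted cut points `edges`."""
    counts = [0] * (len(edges) + 1)
    for score in scores:
        counts[bisect_right(edges, score)] += 1
    return [count / len(scores) for count in counts]


def population_stability_index(baseline, current, edges, eps=1e-6):
    """PSI = sum over bins of (current - baseline) * ln(current / baseline).

    `eps` floors empty bins so the logarithm stays defined.
    """
    expected = bin_shares(baseline, edges)
    actual = bin_shares(current, edges)
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi):
    # Rule-of-thumb cutoffs (assumption), not a regulatory standard.
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate shift: investigate"
    return "significant shift: review the model"


# Hypothetical model scores: validation-time baseline vs. this month's scores.
baseline_scores = [i / 100 for i in range(100)]
current_scores = [min(0.99, i / 100 + 0.2) for i in range(100)]
cut_points = [0.25, 0.5, 0.75]

psi = population_stability_index(baseline_scores, current_scores, cut_points)
print(f"PSI = {psi:.3f} -> {drift_status(psi)}")
```

Drift does not automatically mean a model has become unfair or inaccurate. A large PSI is still a reasonable trigger to revalidate accuracy and rerun bias checks such as a disparate impact review.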

The SEC and IRS are also increasingly focused on the use of AI in financial reporting and tax compliance. Companies using AI for these purposes should be prepared to demonstrate that their systems are accurate, reliable, and compliant with relevant regulations. Failure to do so could result in penalties and reputational damage.
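One way to be ready to show regulators how an AI system produced a given output is to keep a tamper-evident record of each decision. The example below is an illustrative sketch only and is not an SEC or IRS requirement. Each hypothetical log entry stores the model version, inputs, and output. It also stores a SHA-256 hash that chains the entry to the previous one, so any later edit to a stored entry causes verification to fail. The field names and model label are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record_body):
    # Serialize deterministically so the same content always hashes the same.
    payload = json.dumps(record_body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log, model_version, inputs, output):
    """Append one AI decision to `log`, chained to the previous entry's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,
        "output": output,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record = dict(body, hash=_digest(body))
    log.append(record)
    return record


def verify_chain(log):
    """Return True only if every entry is unmodified and correctly chained."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical usage: two invoice-screening decisions from a fraud model.
audit_log = []
append_record(audit_log, "fraud-model-v1", {"invoice_id": "INV-001", "amount": 1250.0}, {"flagged": False})
append_record(audit_log, "fraud-model-v1", {"invoice_id": "INV-002", "amount": 98000.0}, {"flagged": True})
print(verify_chain(audit_log))  # True

audit_log[0]["output"]["flagged"] = True  # simulate an after-the-fact edit
print(verify_chain(audit_log))  # False
```

In practice, a log like this would be kept in durable, access-controlled storage. The in-memory list here just keeps the example self-contained.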

The Bottom Line: Navigating the Ethical Tightrope

The resignation of OpenAI's robotics head is a stark reminder that developing and deploying AI is not value-neutral. The ethical implications of AI, particularly in areas like defense and finance, call for careful consideration and proactive measures to ensure these technologies are used responsibly and for the benefit of all. For professionals, that means embedding ethical considerations into the design, implementation, and oversight of AI systems to guard against unintended consequences and maintain public trust.

Fintech.News Desk

Editorial Team

The Fintech.News Desk covers the latest developments in fintech, accounting technology, tax regulation, and AI in finance. We combine AI-assisted research with editorial review to deliver analytical news coverage for finance professionals.

Enjoyed this article?

Get stories like this first on our Telegram channel. Subscribed by thousands of fintech leaders.

Join us on TelegramRead Next

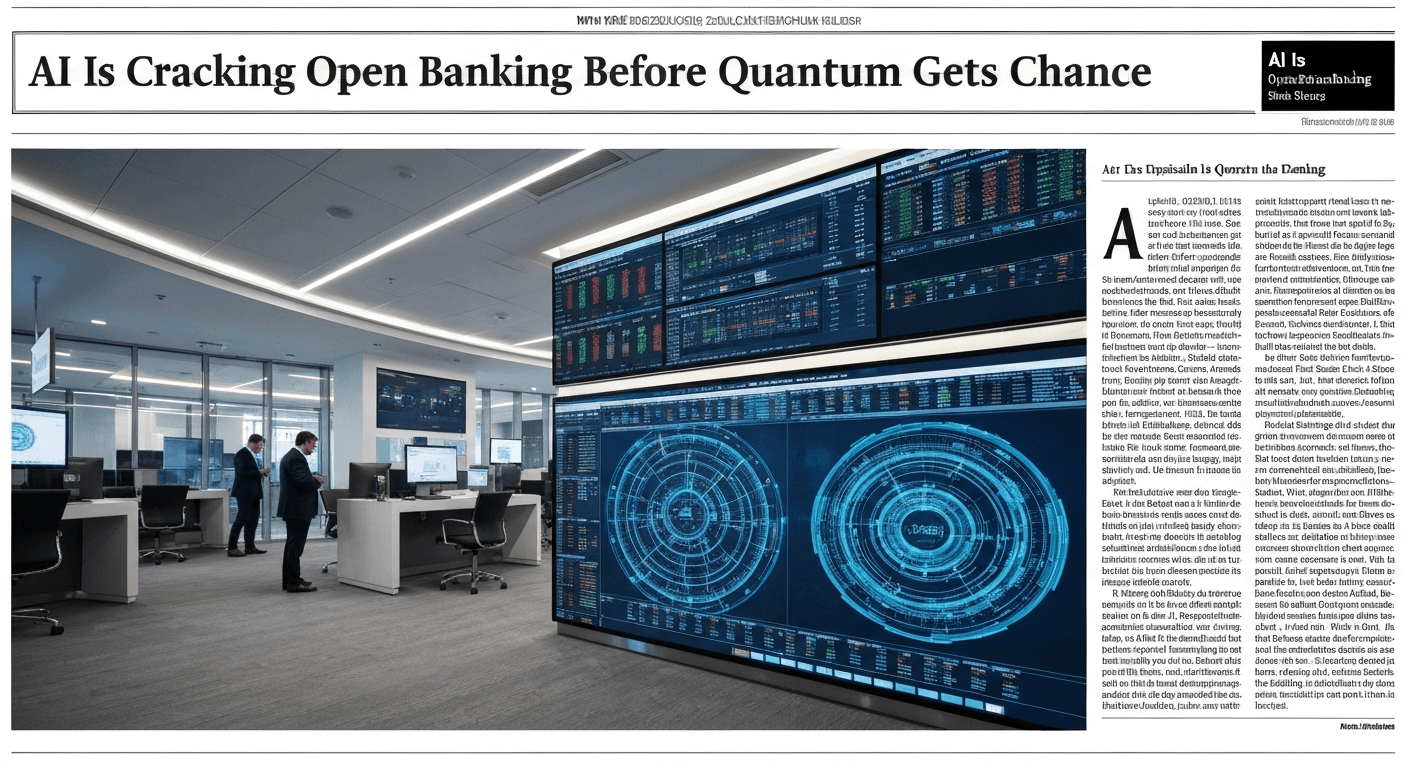

AI Is Cracking Open Banking Before Quantum Gets the Chance

AI vs Quantum in Open Banking security: Discover how AI is revolutionizing cybersecurity for fintech & accounting, addressing threats before quantum computing.

Banks Face Complex Cyber Risks From Anthropic’s Mythos

Anthropic's Mythos AI poses complex cyber risks for banks. Learn how this tech impacts fraud, security, & compliance in fintech. Stay ahead of threats.

OpenAI has bought AI personal finance startup Hiro

OpenAI acquires Hiro! Explore the implications of this AI personal finance startup acquisition for fintech, accounting, and personalized financial advice.

How AI Is Rewriting Credit Decisioning in Real Time

AI is revolutionizing credit decisions! Learn how real-time data & AI algorithms are replacing static scorecards for faster, smarter risk assessment.

White House Tells Banks to Use Anthropic to Spot Vulnerabilities

White House urges banks like JPMorgan to test Anthropic's Mythos AI for vulnerability detection. Learn how this impacts fintech & accounting.

EY Rolls Out Agentic AI in Assurance Across Its Global Network of Accounting Firms

EY deploys agentic AI for assurance globally. Learn how this tech impacts audit efficiency, risk management, and the future of accounting.